Programming Virtual Sensation

If the Metaverse is to truly claim the title of ‘virtual reality’, it will need to go far beyond world-building. Most of the work in the VR space has focused on graphics: their realism, frame rate, and style. However, reality is far more than just the visual.

To truly feel like you’re in a city or a meadow, you need to hear the traffic or the birdsong, both near and far, smell the metal or the flowers, and feel the breeze on your body. These are the abstract, ephemeral challenges the tech industry faces in building a true virtual reality. They will be solved not by computing power but by creativity, innovation, and a truly staggering amount of physics.

Here’s how Metaverse developers are responding to this challenge:

Touch

Perhaps the most important piece of the sensation puzzle in the Metaverse is touch. As the term ‘physical reality’ suggests, touch is the main thing that separates the physical world from a virtual one. Without touch, it is difficult to feel truly immersed or grounded in a world, and, most importantly, interactivity is limited.

Imagine the difference between selecting an object on a screen and actually grabbing it, feeling its material, pressure, and temperature in your hand. Imagine running your hand through a virtual river and not being able to tell it apart from a real one. This is the goal of VR developers.

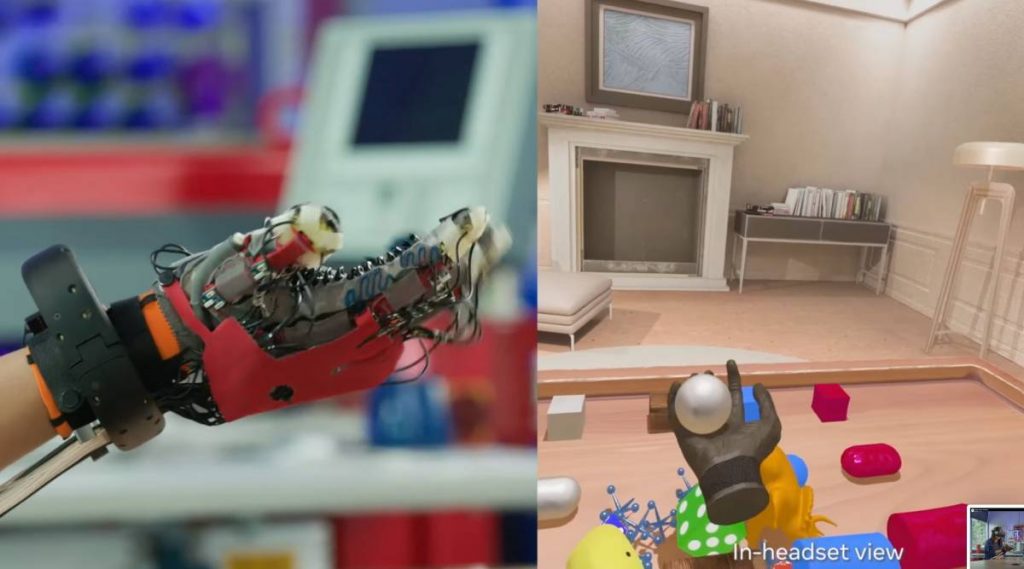

Haptic Gloves

The most obvious solution to the problem of touch is some kind of electronic glove: one that tracks the hand in the metaverse, detects which objects it is interacting with, and produces touch output that matches each object’s material and feel. However, the technological challenges are numerous and still at the cutting edge of engineering.

Wearability

The glove would need robotic elements, but with current electronic circuits and cooling mechanisms it would become too hot and bulky to be practical. This is why some labs are turning to soft robotics, which uses flexible, soft motors instead of metal components. This and other advances in materials science and component optimisation are making these systems smaller, lighter, and more seamless.

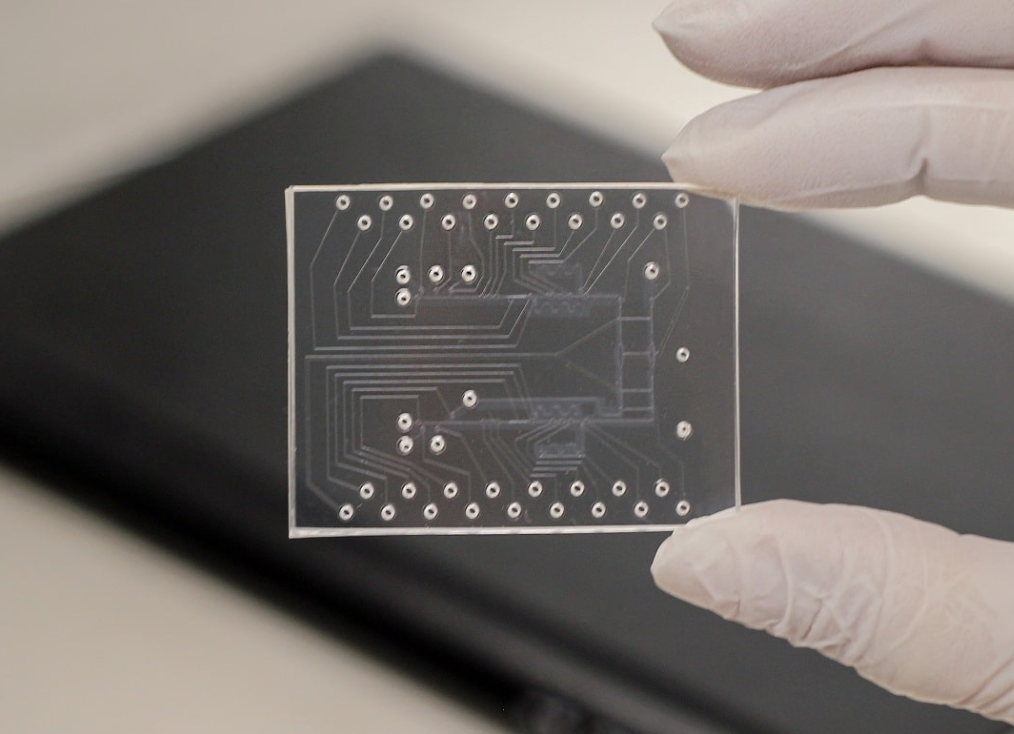

Labs are also developing groundbreaking microfluidic chips. These use air rather than electric current to drive the moving components. Not only does this make the system smaller and more efficient, it also keeps it from overheating.

Touch Response

Any haptic device, glove or otherwise, needs a way to take in signals from the virtual world, interpret them, and respond with accurate tactile feedback covering properties such as material, texture, temperature, and pressure. Labs currently take two main approaches: soft motors and microbubbles, both driven by airflow as described above. The motors move, and the bubbles rapidly inflate and deflate, to mimic different sensations.

Physics Engine

Although ‘rendering’ is usually thought of in relation to graphics, haptic information also has to be rendered, from the software to the glove. This means building a ‘physics engine’ that draws on fields such as fluid dynamics, mechanics, and materials science to approximate what an object would feel like. It also requires a database of haptic properties, much as graphics rely on a texture and material library. The engine then has to stay in sync with the glove’s moving parts so that the transition from virtual to physical reality feels completely seamless.
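To make ‘haptic rendering’ a little more concrete, here is a minimal Python sketch of one classic technique, penalty-based (spring-damper) force rendering. When a virtual fingertip pushes into a surface, the engine returns a force proportional to how deep it has gone, with stiffness and damping looked up from a small material table standing in for the haptic database. The material names, their numeric values, and the hypothetical `actuator_command` mapping to a 0-to-1 drive level are all assumptions for illustration, not measured data or any lab’s actual engine.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class HapticMaterial:
    """Hypothetical per-material properties in a haptic 'library'."""
    stiffness: float  # N/m: how hard the surface pushes back per metre of penetration
    damping: float    # N*s/m: extra resistance proportional to pressing speed


# Illustrative values only, not measured data.
MATERIALS = {
    "clay": HapticMaterial(stiffness=150.0, damping=2.0),
    "wood": HapticMaterial(stiffness=2000.0, damping=5.0),
    "steel": HapticMaterial(stiffness=8000.0, damping=10.0),
}


def contact_force(material: str, penetration_m: float, velocity_m_s: float) -> float:
    """Penalty-based (spring-damper) contact force in newtons.

    penetration_m: depth of the virtual fingertip inside the surface.
    velocity_m_s: fingertip speed into the surface (positive = pressing in).
    """
    if penetration_m <= 0.0:
        return 0.0  # not in contact, so no force
    props = MATERIALS[material]
    force = props.stiffness * penetration_m + props.damping * velocity_m_s
    return max(force, 0.0)  # a surface can push on the finger but never pull it


def actuator_command(force_n: float, max_force_n: float = 10.0) -> float:
    """Scale a force to a 0..1 drive level for a hypothetical glove actuator."""
    return min(max(force_n / max_force_n, 0.0), 1.0)


if __name__ == "__main__":
    # Pressing 2 mm into each material at rest: stiffer materials push back harder.
    for name in MATERIALS:
        f = contact_force(name, penetration_m=0.002, velocity_m_s=0.0)
        print(f"{name}: {f:.2f} N -> drive {actuator_command(f):.2f}")
```

In this sketch, the same 2 mm press feels soft on clay and saturates the actuator on steel, which is the kind of material-dependent response the engine has to produce. A real engine would also need to run this loop far faster than the graphics frame rate to keep contact feeling stable.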

The challenges involved are great, but so are the potential uses of the technology. Imagine using clay or paint simulators to create art and sculpture in the metaverse, feeling the material change shape under your hands.

This opens up new opportunities for many industries that digital technology has not yet served. Fields that are delicate or involve working with your hands could have the freedom to go virtual too. Even in other fields, the simple feeling of handing over an object, or of keys under your fingers as you type, would bring virtual collaboration much closer to the ‘real thing’.

EMG accessories

This one’s a little more out there. A more speculative technology aimed at the touch problem is electromyography (EMG). It uses a non-invasive device, such as a wristband, to detect the electrical signals that drive the muscles of your hand and predict how you’re going to move it. This means the device, and therefore the virtual reality it is connected to, can sense and respond to your intent rather than your action.

EMG wristbands, for example, could be used to create virtual ‘clicks’ that let you intuitively select, move, and alter objects in the virtual environment. The idea, just as with haptic gloves, is to create a seamless experience that doesn’t feel laggy or distant.
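As a rough illustration of how a muscle signal might become a virtual ‘click’, here is a minimal Python sketch using a simple, well-known approach: compute a root-mean-square (RMS) envelope of the raw signal, then apply two thresholds (hysteresis) so that each contraction fires exactly one click. The window size, threshold values, and synthetic test signal are assumptions chosen for the example; a real wristband would likely add filtering, calibration per user, and a trained gesture classifier.

```python
import math
from typing import List, Sequence


def rms_envelope(samples: Sequence[float], window: int) -> List[float]:
    """Root-mean-square amplitude over a trailing window: a basic EMG 'envelope'."""
    envelope = []
    for i in range(len(samples)):
        chunk = samples[max(0, i - window + 1): i + 1]
        envelope.append(math.sqrt(sum(s * s for s in chunk) / len(chunk)))
    return envelope


def detect_clicks(envelope: Sequence[float], on_threshold: float, off_threshold: float) -> List[int]:
    """Return the sample indices at which a 'click' gesture starts.

    A click fires when the envelope rises above on_threshold. The detector
    only re-arms once the envelope drops below off_threshold (hysteresis),
    so one sustained muscle contraction yields one click, not a burst of them.
    """
    clicks = []
    armed = True
    for i, value in enumerate(envelope):
        if armed and value > on_threshold:
            clicks.append(i)
            armed = False
        elif not armed and value < off_threshold:
            armed = True
    return clicks


if __name__ == "__main__":
    # Synthetic signal: rest, a contraction, rest, another contraction.
    rest = [0.0] * 20
    burst = [1.0 if i % 2 == 0 else -1.0 for i in range(20)]
    signal = rest + burst + rest + burst
    env = rms_envelope(signal, window=5)
    print(detect_clicks(env, on_threshold=0.5, off_threshold=0.2))  # prints [21, 61]
```

The gap between the two thresholds is the design choice that matters here: with a single threshold, a noisy envelope hovering near the line would register several clicks for one intended gesture.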

Combining EMG input with haptic output could soon lead to consumer products that feel genuinely lifelike.

Environment

On a larger scale, technology is being developed that would make the metaverse a 4D experience in the more traditional sense: wind and water generators, installed in large rigs or built into headsets, that mimic sensations such as wind, breeze, temperature, and humidity. These may seem like relatively unimportant details, but creating a virtual world is about tricking the human brain, which is not easy. Even the tiniest details that we never notice in daily life must be replicated so as not to break the illusion.

Smell

Another component of the real world we may not always realise the importance of is smell. However, it is precisely because we don’t think about it that smell is so powerful. Smell is not just another way we receive information about the world. It is deeply tied to our subconscious minds and plays a big role in our mood and understanding of a place and situation.

There are many traditional brands that already employ smell to great effect. Disney, for example, pumps its parks full of the comforting smell of vanilla extract to create a childlike atmosphere. Subway is famous for its smell of freshly baked bread that works to attract customers. In short, smell works. It is subliminal, invisible, and extremely powerful.

Brands are developing scent cartridges that pair with VR headsets to mimic smells in the metaverse. Chemists synthesize familiar smells like pine or roses, and these cartridges are loaded into the system. When an interaction in the metaverse, such as picking a flower, triggers a cartridge, the headset releases the corresponding scent as a mist or spray.
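The trigger flow described above can be sketched as a small event-to-cartridge lookup. Everything in this Python example is hypothetical: the event names, the slot layout, and especially the per-slot cooldown and dose counting, which are our own assumptions about what such a controller might need so that a scent is not sprayed continuously or requested from an empty cartridge.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass
class ScentCartridge:
    scent: str       # e.g. "rose" or "pine"
    doses_left: int  # how many releases remain (assumed to be countable)


@dataclass
class ScentController:
    """Hypothetical controller mapping metaverse interaction events to cartridges."""
    cartridges: Dict[str, ScentCartridge]  # slot name -> cartridge in that slot
    event_to_slot: Dict[str, str]          # interaction event -> slot name
    cooldown_s: float = 5.0                # assumed minimum gap between releases per slot
    _last_release: Dict[str, float] = field(default_factory=dict)

    def on_event(self, event: str, now_s: float) -> Optional[str]:
        """Return the scent released for this event, or None if nothing fires."""
        slot = self.event_to_slot.get(event)
        if slot is None:
            return None  # this interaction has no scent attached
        last = self._last_release.get(slot)
        if last is not None and now_s - last < self.cooldown_s:
            return None  # too soon: avoid saturating the air with repeated bursts
        cartridge = self.cartridges[slot]
        if cartridge.doses_left <= 0:
            return None  # cartridge is empty
        cartridge.doses_left -= 1
        self._last_release[slot] = now_s
        return cartridge.scent


if __name__ == "__main__":
    controller = ScentController(
        cartridges={"A": ScentCartridge("rose", doses_left=2)},
        event_to_slot={"pick_flower": "A"},
    )
    for t in (0.0, 1.0, 6.0, 12.0):
        print(t, controller.on_event("pick_flower", now_s=t))
```

Here the second pick, one second after the first, is ignored because of the cooldown, and the fourth returns nothing because the two doses are used up. Keeping the mapping as plain data means new scents could, in principle, be attached to new interactions without changing the controller logic.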

Creating a More Accessible World

These technologies may seem niche or even unnecessary, but for building a true virtual reality they are essential. To make a virtual world rather than just an immersive video, all five senses must be incorporated. Without sensory input that agrees with the visuals, immersion and interactivity are limited.

The applications of these new immersion tools are far-reaching. They are game-changing for various industries, as they enable virtual collaboration and eliminate geographical barriers. However, their main benefit is for one of the most overlooked groups in society: people with disabilities. Immersive technology like this allows people with all sorts of illnesses, disabilities, and limitations to take part in a world of social interaction, collaboration, and openness.

Making the metaverse more lifelike isn’t just about gaming; it’s about giving people access to social worlds, breaking down geographical barriers, and bringing people together. Immersive technology, particularly haptic feedback, has been shown to have a measurable positive impact on people’s mental health and self-reported satisfaction. The metaverse proves that no barrier, whether physical, practical, or geographical, needs to be exclusionary.

If there’s one thing that Metaverse developers and the Web3.0 community at large have shown, it’s their ability to innovate. And innovation, even in one narrow field, ends up being good for everyone. Welcome to the future of reality, inclusivity, and accessibility.